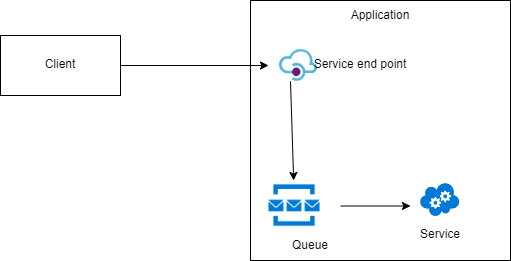

Queue-Based Load Leveling pattern

A sudden increase in the number of requests to a service may cause performance and reliability problems. To mitigate this, introduce a queue between the client and the service.

The client posts messages to the queue, each carrying the data the service needs to process it. The service retrieves messages from the queue and processes them at its own pace.
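The flow above can be sketched with an in-process queue. This is only an illustration: Python's `queue.Queue` stands in for a real message broker, and `run_demo`, `service_worker`, and the `order_id` field are hypothetical names chosen for the example.

```python
import queue
import threading


def run_demo(num_messages: int = 5) -> list:
    """Post messages to a queue and let a single worker drain them."""
    work_queue = queue.Queue()  # stand-in for a real message broker
    processed = []

    def service_worker() -> None:
        # Service side: pull messages one at a time, at its own pace.
        while True:
            message = work_queue.get()
            if message is None:  # sentinel: the client has nothing more to send
                break
            processed.append(f"processed {message['order_id']}")

    worker = threading.Thread(target=service_worker)
    worker.start()

    # Client side: post everything immediately, without waiting for the service.
    for order_id in range(num_messages):
        work_queue.put({"order_id": order_id})
    work_queue.put(None)

    worker.join()
    return processed


if __name__ == "__main__":
    print(run_demo())
```

The client finishes posting regardless of how fast the worker runs, which is the load-leveling effect in miniature.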

This decouples the client from the service, so the two can run asynchronously. The client can also keep posting requests to the queue while the downstream service is down; the messages wait in the queue until the service recovers.

Downstream services can be scaled up or down based on how many messages are waiting in the queue.
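A scaling rule driven by queue depth might look like the sketch below. The function name `desired_workers`, the per-worker capacity of 100 messages, and the worker limits are all assumptions made for illustration; real thresholds depend on the workload and on the autoscaler in use.

```python
import math


def desired_workers(queue_depth: int,
                    per_worker_capacity: int = 100,
                    min_workers: int = 1,
                    max_workers: int = 10) -> int:
    """Return how many service instances to run for the current backlog."""
    # One worker per `per_worker_capacity` waiting messages, rounded up.
    needed = math.ceil(queue_depth / per_worker_capacity)
    # Clamp so we never scale to zero or beyond the allowed maximum.
    return max(min_workers, min(max_workers, needed))
```

For example, with these assumed defaults a backlog of 250 messages asks for 3 workers, while an empty queue falls back to the minimum of 1.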

Points to consider before implementing this pattern

Queues are a one-way communication mechanism. If the client expects a response from the service, we need a separate mechanism for the service to send it back, for example a reply queue.
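One common way to return responses is a second queue plus a correlation ID that ties each reply to its request. The sketch below assumes in-process queues and a trivial "uppercase the payload" service; `client_send`, `service_process_one`, and `collect_replies` are hypothetical helper names.

```python
import queue
import uuid

request_queue = queue.Queue()  # client -> service
reply_queue = queue.Queue()    # service -> client


def client_send(payload: str) -> str:
    """Post a request and return the correlation ID used to match the reply."""
    correlation_id = str(uuid.uuid4())
    request_queue.put({"correlation_id": correlation_id, "payload": payload})
    return correlation_id


def service_process_one() -> None:
    """Take one request, do the work, and post the result to the reply queue."""
    request = request_queue.get()
    result = request["payload"].upper()  # placeholder for real processing
    reply_queue.put({"correlation_id": request["correlation_id"],
                     "result": result})


def collect_replies() -> dict:
    """Drain the reply queue into a dict keyed by correlation ID."""
    replies = {}
    while not reply_queue.empty():
        reply = reply_queue.get()
        replies[reply["correlation_id"]] = reply["result"]
    return replies
```

The client keeps the returned correlation ID and looks up its result later, so it never blocks on the service while the request is in flight.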

The order of messages may not be guaranteed, which matters when a solution requires messages to be processed in a specific order.
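When ordering matters and the queue does not guarantee it, one option is for the consumer to reorder messages itself. The sketch below assumes the producer stamps every message with a consecutive sequence number starting at 0; the `Resequencer` class is a hypothetical helper, not part of any queueing library.

```python
class Resequencer:
    """Buffer out-of-order messages and release them in sequence order."""

    def __init__(self, first_sequence: int = 0) -> None:
        self._next = first_sequence  # sequence number we are waiting for
        self._pending = {}           # early arrivals, keyed by sequence number

    def accept(self, sequence: int, payload: str) -> list:
        """Store one message; return every payload that is now deliverable."""
        self._pending[sequence] = payload
        ready = []
        # Release the longest consecutive run starting at the expected number.
        while self._next in self._pending:
            ready.append(self._pending.pop(self._next))
            self._next += 1
        return ready
```

A message that arrives early is simply held back until the gap before it is filled. Some brokers offer ordered delivery natively, which avoids this buffering on the consumer side.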

Message processing logic in the service should be idempotent, because the same message may be delivered and processed more than once.
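A simple way to get idempotency is to remember which message IDs have already been applied. The sketch below assumes every message carries a unique `message_id` and keeps the seen IDs in memory; a real service would persist them alongside the data. `AccountService` and the deposit scenario are made up for illustration.

```python
class AccountService:
    """Applies deposit messages, ignoring repeated deliveries."""

    def __init__(self) -> None:
        self.balance = 0
        self._seen_message_ids = set()  # assumption: in-memory only

    def handle(self, message: dict) -> bool:
        """Apply the deposit once; return False for a duplicate delivery."""
        if message["message_id"] in self._seen_message_ids:
            return False  # already applied, so do nothing
        self.balance += message["amount"]
        self._seen_message_ids.add(message["message_id"])
        return True
```

Delivering the same deposit twice leaves the balance unchanged after the first call, which is the property the queue consumer needs.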

A poison message is one that the receiving service cannot process. A proper mechanism should be in place to handle poison messages, so that they are not retried forever.
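A typical mechanism is to cap the number of attempts and move messages that keep failing to a separate dead-letter queue for later inspection. The sketch below uses in-process queues; `drain`, the `attempts` field, and the limit of 3 attempts are assumptions for illustration.

```python
import queue

MAX_ATTEMPTS = 3  # assumed retry limit


def drain(work_queue: queue.Queue, dead_letter_queue: queue.Queue, handler) -> None:
    """Process messages until the queue is empty, parking repeated failures."""
    while True:
        try:
            message = work_queue.get_nowait()
        except queue.Empty:
            return
        try:
            handler(message["body"])
        except Exception as error:
            message["attempts"] = message.get("attempts", 0) + 1
            if message["attempts"] >= MAX_ATTEMPTS:
                # Give up: keep the message and the error for later inspection.
                message["error"] = repr(error)
                dead_letter_queue.put(message)
            else:
                work_queue.put(message)  # put it back for another attempt
```

Healthy messages are processed normally, while a message whose handler always raises ends up in the dead-letter queue after three attempts instead of circulating indefinitely.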

Ref:

https://docs.microsoft.com/en-us/previous-versions/msp-n-p/dn589781(v=pandp.10)

https://www.amazon.in/gp/product/B009G8PYY4/ref=dbs_a_def_rwt_bibl_vppi_i0