System Design : Designing API Rate Limiter

What is a Rate Limiter ?

Rate limiters restrict how many requests a sender (either a user or an IP address) can issue in a certain amount of time (e.g. 30 requests per minute).

Why Rate Limiting is Used ?

Avoid resource starvation as a result of Denial of Service attack.

Prevent servers from being overloaded

Some third party api’s are charged on a per call basis. Limiting the number of calls is essential to reduce costs

Algorithms for rate limiting

Here is the list of popular algorithms used to implement rate limiting.

- Token bucket

- Leaking bucket

- Fixed window counter

- Sliding window log

- Sliding window counter

Token bucket algorithm

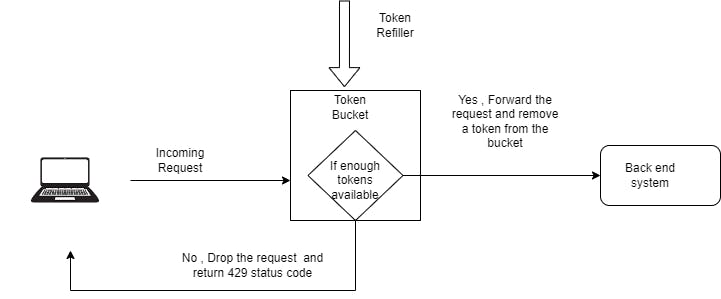

Each user would get a token bucket with predefined capacity. Tokens are added to the bucket by a refiller at a preset rate periodically. When the predefined capacity exceeds no new tokens are added.

For each incoming request we check if there are enough tokens in the users bucket. If there are enough tokens we would allow the request and remove one token from the bucket. If there are not enough tokens the requests are dropped.

Leaking bucket algorithm

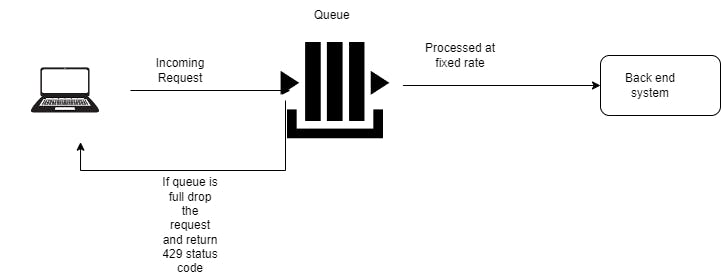

Leaking bucket algorithms process incoming requests at a fixed rate .It is usually implemented with a First-in-First-Out queue. All the incoming requests are added to a queue. If the queue is full the request is dropped. Requests are pulled from the queue and processed at fixed rate(eg : 3 request per second)

Fixed window counter algorithm

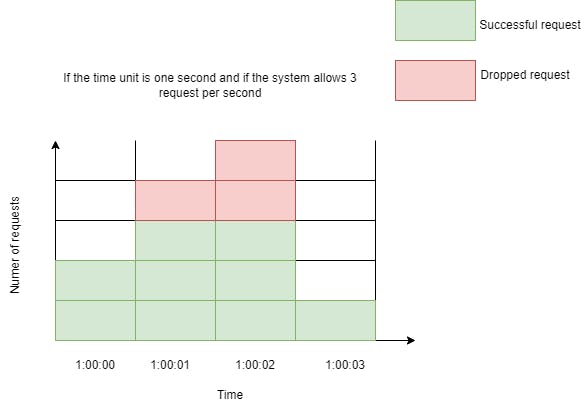

In this algorithm , we divide the time into a fixed window ( e.g. 5 secs) and allocate a counter for that window. Each incoming request increments the counter for the respective window. If the counter reaches the pre-defined threshold, new requests are dropped until a new time window starts.

This algorithm has the major drawback of causing too many requests exceeding the quota to be sent during a burst of traffic at the edges of time windows.

Sliding window log algorithm

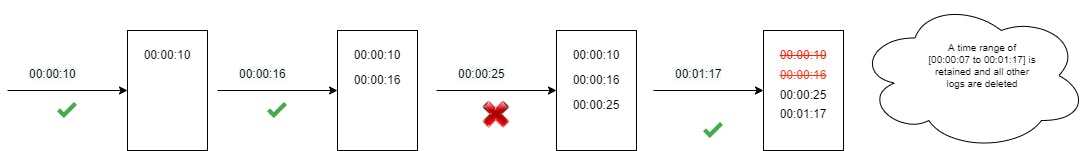

Fixed window algorithm allows more requests to go through at the edge of a window. Sliding window log fixes the issue. In sliding window log the window boundary is dynamic instead of fixed. This algorithm keeps a log of request timestamp for each user. When a new request comes in, remove all outdated timestamps. Then we decide whether this request should be processed depending on whether the log size has exceeded the limit. For example, suppose the rate limit is 2 requests per minute:

Sliding window log algorithm provides a more accurate rate limit because the window boundary is dynamic instead of fixed.

The disadvantage of this approach is its memory footprint. even if a request is rejected, its request time is recorded in the log.

Sliding window counter algorithm

The sliding window counter algorithm is a hybrid approach that combines the fixed window counter and sliding window log.

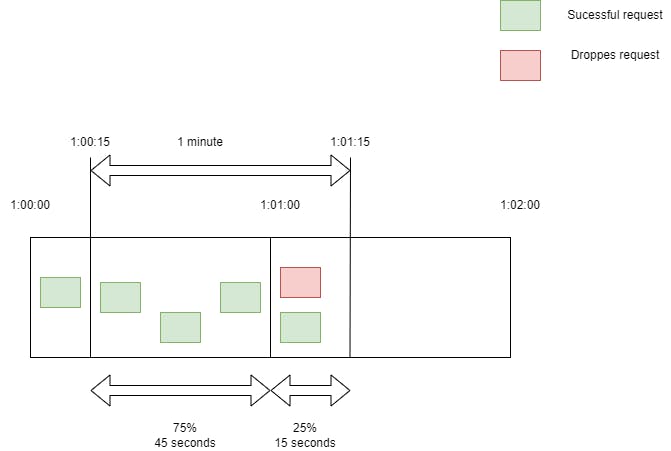

For example, suppose the rate limit is 4 requests per minute:

There are 4 requests in window [1:00:00, 1:01:00) and 2 requests in window [1:01:00, 1:02:00). For 2 requests that arrive at 1:01:15, which is at 25% position of the window [1:01:00, 1:02:00), we calculate the request count by the formula = previous counter * ((time unit - time into the current counter) / time unit) + current counter

3 x (1 - 25%) + 2 = 4.25 > 4. Thus we reject this request.